A heated debate around artificial intelligence has taken a dangerous turn.

A 20-year-old man, Daniel Moreno-Gama, is now facing serious federal and state charges after allegedly carrying out coordinated attacks targeting Sam Altman, the CEO of OpenAI.

What makes this case stand out isn’t just the violence. It’s the intent behind it.

What actually happened

According to federal prosecutors and law enforcement officials:

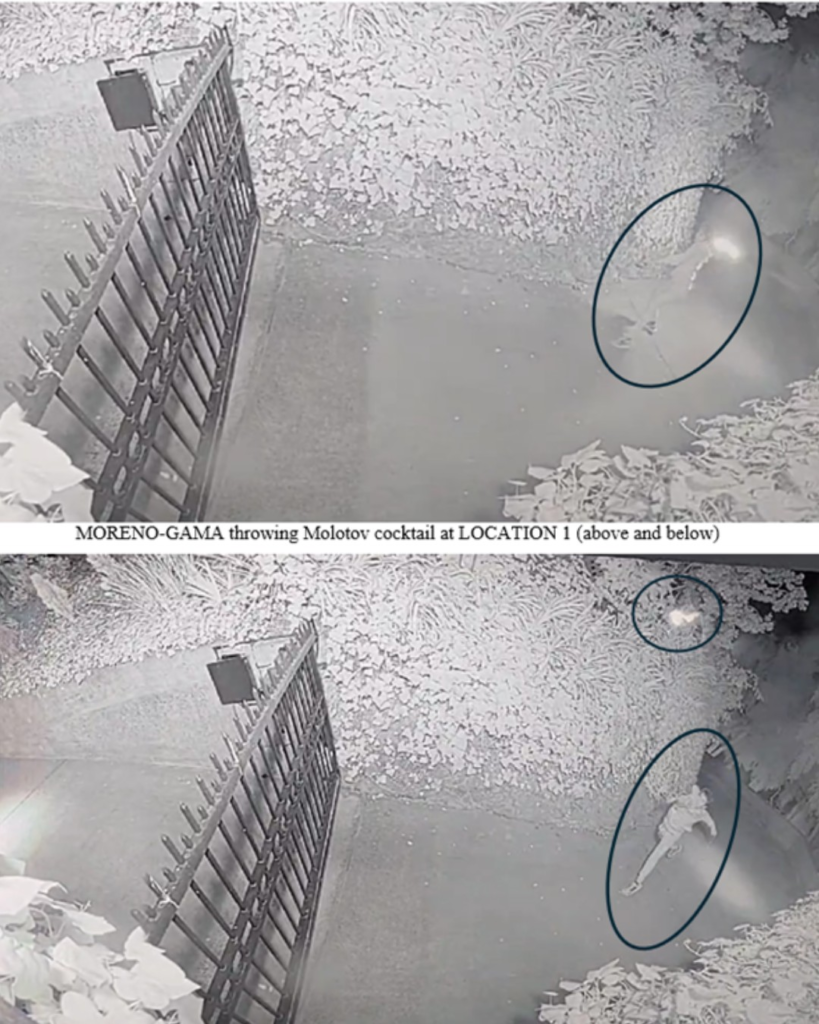

- Moreno-Gama travelled from Texas to California

- He allegedly threw a Molotov cocktail at Altman’s San Francisco home

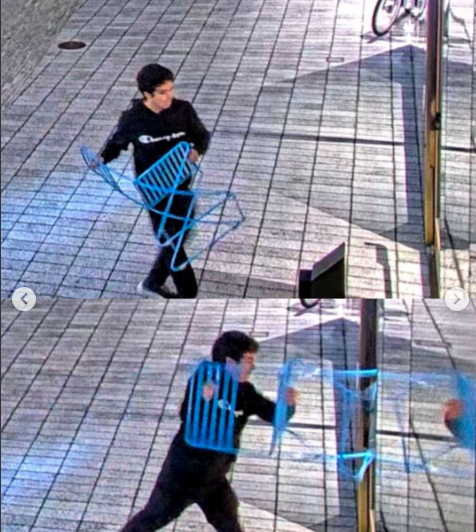

- Shortly after, he went to OpenAI’s headquarters

- There, he reportedly tried to break in using a chair and threatened to burn the building

Authorities confirmed that:

- No one was injured

- Damage was limited to property

- The suspect was arrested at the scene

But the situation could have escalated quickly. Officials described the incident as a direct threat to life, not just vandalism.

The physical threat to OpenAI’s headquarters sits alongside a year of documented security vulnerabilities in OpenAI’s own products, including a DNS-based data exfiltration flaw discovered in ChatGPT earlier in 2026.

This attack reflects a broader cultural fracture around AI development, one visible in how younger generations are increasingly turning against AI tools and the companies building them.

The document that changed everything

This wasn’t treated as a random act.

Police recovered a document titled “Your Last Warning,” allegedly written by Moreno-Gama.

According to investigators, it included:

- Statements admitting he “attempted to kill” the target

- Calls for violence against AI executives and investors

- Names and addresses of individuals linked to AI companies

- Broader arguments about AI posing an existential threat to humanity

Authorities say the document also encouraged others to commit similar acts, with the suspect claiming he needed to “lead by example.”

That shifts this from protest to ideologically driven violence.

The charges and what they mean

Moreno-Gama is facing both federal and state-level charges, including:

- Attempted destruction of property using explosives

- Possession of an unregistered firearm

- Attempted murder (state-level charges)

Legal officials have made their position clear:

Violence, regardless of whether it’s tied to politics, technology, or ideology, will be prosecuted aggressively.

If convicted on all counts, the penalties could be severe, potentially including decades in prison or even a life sentence.

Any known link between Moreno-Gama and OpenAI?

There is no verified evidence that Daniel Moreno-Gama had any professional, financial, or personal connection with OpenAI or its CEO, Sam Altman. Authorities have not reported any prior employment, business dealings, or direct disputes involving the company.

What has emerged instead points to ideological motivation. Investigators say the suspect carried written material expressing strong opposition to artificial intelligence and targeting leaders in the AI sector more broadly. The incident appears driven by personal beliefs about AI’s risks, rather than any specific grievance tied to OpenAI’s operations or business practices.

Anthropic, OpenAI’s closest competitor, faced its own security exposure this year when Claude Code’s source code was accidentally leaked, a reminder that AI labs carry vulnerabilities on multiple fronts simultaneously.

At this stage, officials are treating it as an isolated, extremist act, not the result of an internal conflict, corporate dispute, or prior relationship.

Official response: draw the line early

Statements from authorities and institutions have been consistent.

Officials from the United States Department of Justice emphasised that:

- Disagreement with AI is legitimate

- Violence is not

Meanwhile, representatives from OpenAI stated:

- There is no place for violence in public discourse

- The debate around AI must happen through democratic and lawful channels

Local officials also warned about the impact of escalating rhetoric, especially as AI becomes a more polarising topic globally.

The bigger issue: AI is becoming a flashpoint

This case isn’t happening in isolation.

AI has rapidly shifted from a technical discussion to a societal pressure point:

- Job displacement fears

- Ethical concerns around training data

- Questions about long-term human impact

Leaders like Sam Altman have become public faces of that debate, and, increasingly, targets of criticism.

In this case, that criticism escalated into something far more dangerous.

OpenAI’s physical security concerns arrive alongside documented digital ones. Earlier in 2026, North Korean hackers came within reach of the company’s macOS app-signing certificates in a supply chain attack that ran through a compromised developer tool.

Where the line has to be drawn

There’s a clear distinction that institutions are now reinforcing:

- Debate AI: necessary

- Challenge AI companies: expected

- Threaten or attack individuals: criminal

The risk isn’t just to individuals. It’s to the entire conversation.

Once violence enters the picture, meaningful debate disappears.

Conclusion

This incident isn’t about AI tools.

It’s about how society responds to rapid technological change.

Fear, uncertainty, and disagreement are all part of that process. But when they turn into targeted violence, the conversation breaks down completely. And that’s the line authorities are now making very clear.

What people are asking after this incident

Was anyone harmed in the attack?

No. Authorities confirmed that neither Sam Altman, his family, nor employees at OpenAI were injured. The incident caused property damage, but the situation was contained before it escalated further.

Why is this case being handled at a federal level?

Because it involves explosives, interstate travel, and targeted threats. Federal agencies step in when crimes cross state lines or involve federal offences, such as the use of explosive devices.

What was the motive behind the attack?

According to investigators, the suspect held strong anti-AI views. The document recovered by police outlined concerns about AI’s impact on humanity and included calls for violence against AI executives and investors.

Could this affect how AI companies operate?

In the short term, it may lead to increased security for executives and offices. Long term, it reinforces the need for responsible communication and de-escalation around AI debates, especially as public scrutiny continues to grow.

Is anti-AI sentiment increasing globally?

Yes, but it takes different forms. Most opposition is expressed through debate, demands for regulation, and industry criticism. Incidents like this are rare, but they highlight how intense and emotional the conversation around AI has become.
