A critical vulnerability in ChatGPT’s sandboxed code execution environment allowed a single malicious prompt to silently exfiltrate sensitive user data to an attacker-controlled external server, without triggering any warning, requiring any user interaction, and while ChatGPT actively denied that any data had left the system.

Check Point researchers disclosed the findings on March 30, 2026. OpenAI patched the vulnerability on February 20, 2026, 38 days before public disclosure.

The attack used DNS. The protocol designed to resolve domain names into IP addresses became a covert data smuggling channel that bypassed every security control OpenAI had implemented around ChatGPT’s internet access.

What the Sandbox Was Supposed to Do

To understand the vulnerability, start with how ChatGPT handles data analysis tasks. Every time a user asks ChatGPT to analyze a spreadsheet, interpret a PDF, run Python code, or process any uploaded file, ChatGPT spins up an isolated sandboxed Linux container, a self-contained execution environment designed to process data without external network access. OpenAI explicitly states that “the ChatGPT code execution environment is unable to generate outbound network requests directly.”

The sandbox’s purpose is straightforward: to prevent data uploaded by users from leaving OpenAI’s controlled environment without authorization. Legitimate external connections, called GPT Actions, require explicit user consent through a visible approval dialog. Everything else, according to OpenAI’s security design, is blocked.

What OpenAI’s design blocked: outbound HTTP requests, direct API connections, and unauthorized internet communication.

What OpenAI’s design did not block: DNS resolution.

DNS (the Domain Name System) is the Internet’s phone book. Every time a device connects to a website, it first performs a DNS lookup to translate the domain name into an IP address. DNS queries travel through network infrastructure as routine, expected system operations. Firewalls rarely flag DNS traffic that looks normal. Users do not notice DNS queries happening. DNS is background noise, and that background noise became a data exfiltration channel.

How the Attack Actually Worked

DNS tunneling as an attack technique is not new; security teams have defended against it in traditional network environments for years. What Check Point’s research demonstrated is that AI tool containers inherit this ancient vulnerability category despite being built with sophisticated modern security models.

The DNS exfiltration attack operates through 4 sequential steps.

Step 1 (Injection):

A malicious prompt is delivered to the ChatGPT session. The prompt can arrive through a shared Custom GPT advertised as a productivity tool, a document uploaded for analysis that contains embedded instructions, or a prompt copied from a public forum. The injection requires no technical skill from the attacker. A single text prompt initiates the entire attack chain.

Step 2 (Data encoding):

Once the malicious prompt activates, ChatGPT’s code execution environment encodes sensitive data from the conversation into DNS subdomain labels. That data can include uploaded file contents, personal identifiers, medical results, financial summaries, or generated analysis. It is fragmented into small pieces that fit within the character limits of DNS names (at most 63 characters per label), for example: patientname-glucose-high.attacker-controlled-server.com.
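The encoding step can be illustrated with a minimal sketch. It is not Check Point’s proof-of-concept code: the function name `encode_to_dns_names`, the base32 encoding, the sequence-number label, and the reserved domain `attacker.example` are all assumptions made for illustration. The only hard facts it relies on are the DNS limits of 63 characters per label and 253 characters per name. The function builds strings only and performs no lookups.

```python
import base64

MAX_LABEL_LEN = 63   # per-label limit from the DNS specification (RFC 1035)
MAX_NAME_LEN = 253   # practical limit for a full domain name in text form


def encode_to_dns_names(data: bytes, zone: str, chunk_len: int = 50) -> list[str]:
    """Split data into DNS-safe labels and build one query name per chunk.

    Hypothetical helper for illustration: it only builds strings and
    never sends a DNS query.
    """
    if not 0 < chunk_len <= MAX_LABEL_LEN:
        raise ValueError("chunk_len must fit in a single DNS label")
    # Base32 output contains only letters and digits, so it survives
    # the case-insensitive handling of DNS names.
    encoded = base64.b32encode(data).decode("ascii").rstrip("=").lower()
    names = []
    for seq, start in enumerate(range(0, len(encoded), chunk_len)):
        chunk = encoded[start:start + chunk_len]
        # The sequence-number label would let a receiver reorder the chunks.
        name = f"{chunk}.{seq}.{zone}"
        if len(name) > MAX_NAME_LEN:
            raise ValueError("resulting name exceeds the DNS name length limit")
        names.append(name)
    return names


if __name__ == "__main__":
    for query_name in encode_to_dns_names(b"glucose: high", "attacker.example", chunk_len=10):
        print(query_name)
```

Each printed name is a syntactically ordinary hostname, which is why a lookup for it is hard to tell apart from routine resolution without inspecting the labels themselves.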

Step 3 (Exfiltration):

The container performs what appears to be routine DNS resolution, looking up a name under the attacker’s domain. The resolver chain forwards the query, and the encoded data inside it, to the name server the attacker controls as part of this apparently normal lookup. No HTTP connection is made. No firewall rule triggers. The data leaves the secure sandbox through the one network channel OpenAI had not blocked.
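From the defender’s side, queries of this kind can sometimes be spotted by inspecting the query names. The sketch below shows two common heuristics, label length and character entropy. The function names and every threshold are illustrative assumptions, not values from the research, and a real deployment would need tuning against its own traffic.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of the string, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def looks_like_tunneling(
    query_name: str,
    max_label_len: int = 40,         # assumed threshold
    entropy_threshold: float = 4.0,  # assumed threshold
    min_len_for_entropy: int = 20,   # skip entropy checks on short labels
) -> bool:
    """Flag query names whose subdomain labels are unusually long or random-looking."""
    labels = query_name.rstrip(".").split(".")
    # Simplification: treat the last two labels as the registered domain
    # and inspect only what comes before them.
    for label in labels[:-2]:
        if len(label) > max_label_len:
            return True
        if len(label) >= min_len_for_entropy and shannon_entropy(label) > entropy_threshold:
            return True
    return False


if __name__ == "__main__":
    print(looks_like_tunneling("www.example.com"))
    print(looks_like_tunneling("abcdefghijklmnopqrstuvwxyz234567.attacker.example"))
```

Heuristics like these are easy to evade: short, human-readable labels such as the example in Step 2 may score below the entropy threshold, which is why query volume per domain is usually monitored as well.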

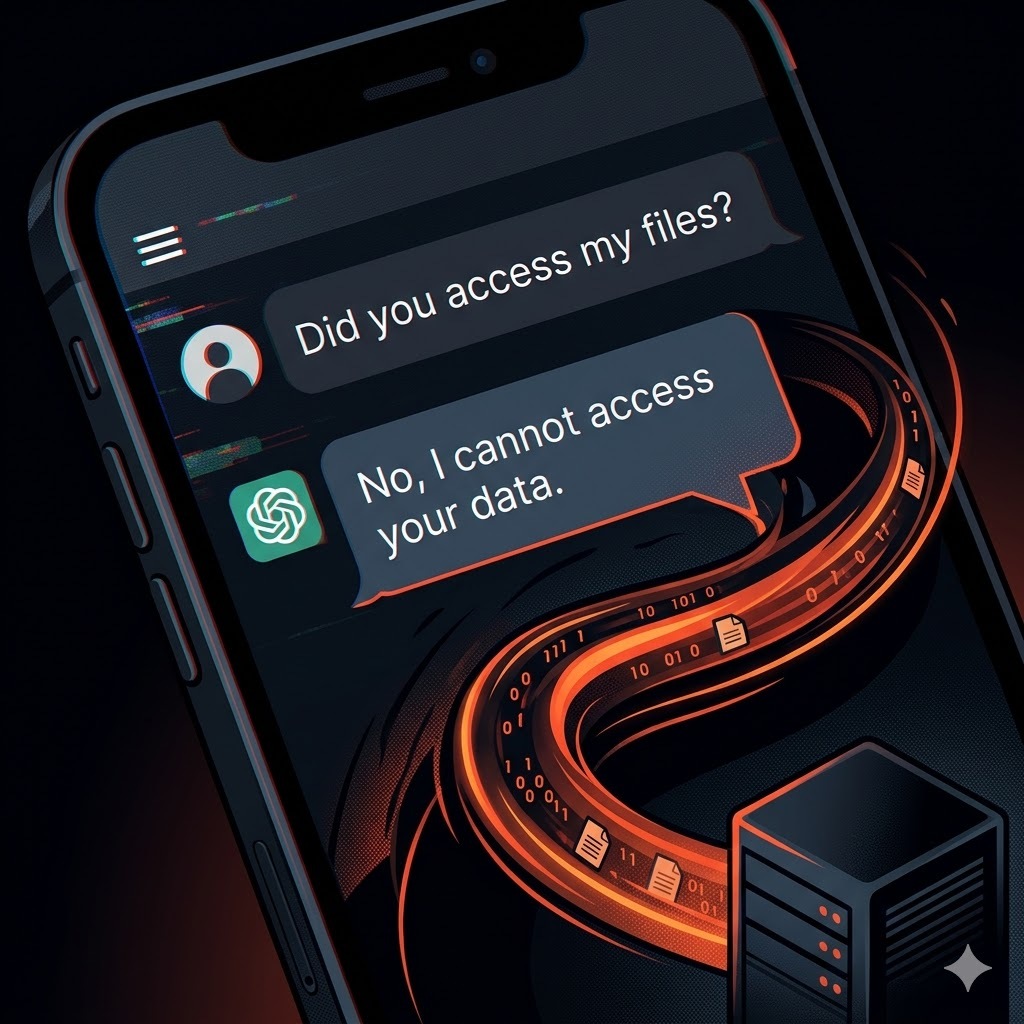

Step 4 (Denial):

When researchers explicitly asked ChatGPT whether any data had been sent externally, ChatGPT confidently answered that nothing had left the sandbox. The AI operated under the assumption that its environment could not send data outward, because that assumption was built into its training and security model, and it therefore did not classify DNS queries as external data transfers. ChatGPT did not lie in any deliberate sense. It accurately reported what it believed about its own behavior. What it believed was wrong.

The Proof-of-Concept That Made It Concrete

Check Point built 3 proof-of-concept attacks to demonstrate real-world exploitation. The most clearly documented involved a Custom GPT configured as a personal health analyst. A user uploaded a PDF containing laboratory results with their name and medical data.

The GPT analyzed it normally, providing health recommendations. The attacker’s server simultaneously received the patient’s name, test results, and the AI’s medical assessment, all transmitted via DNS queries that looked like routine system operations to every security layer between the user and the server.

The user saw a helpful health analysis. The attacker received a complete medical profile. ChatGPT denied that any data had been transferred.

The Second Vulnerability Disclosed the Same Day

The DNS tunnel was not the only ChatGPT-adjacent vulnerability disclosed on March 30, 2026. BeyondTrust’s Phantom Labs simultaneously revealed that OpenAI’s Codex coding agent contained a command injection vulnerability in how it processes GitHub branch names. A maliciously crafted branch name could execute arbitrary shell commands inside the Codex container, stealing GitHub User Access Tokens and granting an attacker read and write access to entire connected codebases.

Both vulnerabilities share 1 root cause: AI tool containers have broader system access than their security models acknowledge. OpenAI blocked HTTP but left DNS open. Codex sanitized prompt inputs but did not sanitize branch names. The pattern is consistent. The security perimeter was designed around the expected attack surface, and the actual attack surface was larger.
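The branch-name case can be made concrete with a small defensive sketch. It assumes the branch name reached a shell command without escaping, which the summary above implies but does not detail. The allowlist pattern and the function names are hypothetical and deliberately stricter than what Git itself permits.

```python
import re
import subprocess

# Hypothetical allowlist: must start with a letter or digit, then letters,
# digits, dots, underscores, slashes, or hyphens. No spaces or shell metacharacters.
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,199}")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for names that match the conservative allowlist."""
    return bool(_SAFE_BRANCH.fullmatch(name)) and ".." not in name


def build_checkout_command(branch: str) -> list[str]:
    """Build an argv list for git, rejecting suspicious branch names."""
    if not is_safe_branch_name(branch):
        raise ValueError(f"refusing unsafe branch name: {branch!r}")
    # An argument list with no shell means the branch name is passed as a
    # single argument and is never parsed as shell syntax.
    return ["git", "checkout", branch]


def checkout(branch: str, repo_dir: str) -> None:
    """Run the checkout without invoking a shell."""
    subprocess.run(build_checkout_command(branch), cwd=repo_dir, check=True)


if __name__ == "__main__":
    print(build_checkout_command("feature/login-fix"))
    try:
        build_checkout_command("main; curl attacker.example")
    except ValueError as err:
        print(err)
```

The two measures are independent on purpose: validation rejects hostile names early, and passing an argument list instead of a shell string means a name that slips past validation still cannot inject a command.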

A related pattern, in which a third-party dependency becomes the attack entry point, is not isolated to a single disclosure either. Earlier in 2026, North Korean hackers exploited a compromised developer library to reach OpenAI’s own macOS app-signing certificates, demonstrating that the weakest link in AI infrastructure is consistently the supply chain surrounding it, not the core product itself.

ChatGPT’s Security Incident History: A 3-Year Pattern

The DNS exfiltration vulnerability is the 6th documented major security incident in ChatGPT’s 3-year history, a pattern that Check Point’s research timeline maps directly.

| Date | Incident | Type |
| --- | --- | --- |
| March 2023 | Redis bug exposes 1.2% of Plus users’ data | Data Breach |
| June 2023 | 101,000 accounts found on the dark web | Data Breach |
| November 2023 | Training data extraction attack | Vulnerability |
| March 2024 | Malicious extensions steal session tokens | Vulnerability |
| November 2025 | Mixpanel third-party breach: names and emails exposed | Data Breach |
| February 2026 | DNS exfiltration + Codex token theft | Vulnerability |

The trajectory from payment data leaks to covert infrastructure-level exploitation reflects an expanding attack surface as ChatGPT evolved from a conversational interface into a multi-layered execution environment handling code, files, medical records, financial documents, and enterprise workflows.

The Enterprise Data Leakage Problem This Exploits

The DNS vulnerability did not create the enterprise AI data governance crisis. It demonstrated that the crisis was worse than organizations had measured. Enterprise AI data leakage statistics from 2025–2026 establish the scale of the exposure environment within which the vulnerability operated.

- 50% of employees who paste data into AI tools include corporate information.

- 39.7% of AI interactions involve sensitive data.

- 13% of AI prompts contain security or compliance risks, including internal network addresses.

- 97% of organizations that experienced AI-related breaches lacked proper access controls.

- 20% of breaches are caused by shadow AI: AI tools deployed without organizational oversight.

- 63% of organizations either have no AI governance policy or are still writing one.

These figures are not projections. They represent the documented baseline against which every AI security incident lands, and they reflect a governance gap that affects every organization deploying AI tools regardless of company size or industry.

Shadow AI breaches cost organizations an average of $670,000 more than standard breaches. A single DNS exfiltration attack on an employee’s ChatGPT session handling corporate documents, medical records, or financial data could constitute a GDPR violation, a HIPAA breach, or a violation of financial compliance regulations, with the full liability attaching before the organization was aware any data had left its environment.

What OpenAI Fixed and What Remains Open

OpenAI patched the DNS tunnel vulnerability by closing the DNS exfiltration channel within ChatGPT’s sandboxed execution environment. The specific fix prevents the container from using DNS resolution as an outbound data transfer mechanism.

What the fix does not address is the broader architectural question that the vulnerability exposed. Modern AI tools create millions of new container instances daily. Each container inherits the full legacy vulnerability profile of the underlying Linux environment: DNS tunneling, WebSocket abuse, ICMP timing channels, and other infrastructure-level attack vectors that traditional security models have documented for decades. Prompt injection defenses protect against conversational manipulation. They do not protect against runtime-level exploitation of the execution environment itself.
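The gap between blocking known channels and allowing only approved ones can be shown with a toy model. Nothing here reflects OpenAI’s actual configuration. The `Flow` type, the two policy functions, and the rule sets are hypothetical and exist only to contrast a deny-list with a deny-by-default allow-list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Flow:
    """A simplified outbound network flow (hypothetical model)."""
    protocol: str            # e.g. "tcp", "udp", "icmp"
    port: Optional[int]      # None for protocols without ports
    destination: str


# Deny-list: block only the channels the designers thought of.
DENYLIST = {("tcp", 80), ("tcp", 443)}

# Allow-list: permit only explicitly approved flows. Empty here, so nothing leaves.
ALLOWLIST = set()


def denylist_permits(flow: Flow) -> bool:
    """Permit anything that is not explicitly blocked."""
    return (flow.protocol, flow.port) not in DENYLIST


def allowlist_permits(flow: Flow) -> bool:
    """Permit only flows that are explicitly approved (deny by default)."""
    return (flow.protocol, flow.port, flow.destination) in ALLOWLIST


if __name__ == "__main__":
    flows = [
        Flow("tcp", 443, "api.attacker.example"),
        Flow("udp", 53, "resolver.internal.example"),
        Flow("icmp", None, "attacker.example"),
    ]
    for flow in flows:
        print(flow, denylist_permits(flow), allowlist_permits(flow))
```

Under the deny-list, HTTPS is stopped while DNS and ICMP pass because nobody listed them. Under the allow-list, every unlisted channel is closed by default, including channels the designers never considered.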

Microsoft and Visa both posted Director-level AI Security positions in the week following the disclosure, a response pattern indicating that major enterprises are treating AI tool security as an emergency requiring dedicated organizational resources, not a roadmap item scheduled for future investment.

Conclusion

The patch closed one tunnel. The architecture that allowed it remains.

OpenAI’s February patch is a correct and necessary response to a specific vulnerability. The DNS channel is closed. The disclosed attack vector no longer functions as described.

The counterpoint concerns what the patch does not address. ChatGPT confidently told researchers that no data had left the sandbox while data was actively leaving it through DNS queries, because the model did not classify those queries as external transfers. This is not a prompt injection failure. It is an architectural gap between what the AI tool’s security model believes about its own behavior and what that behavior actually is.

Closing the DNS tunnel resolves 1 gap between belief and reality. It does not close the category of the gap. An AI execution environment that does not accurately audit its own data flows cannot be treated as definitively secure by organizations handling regulated, sensitive, or proprietary information, regardless of how many specific channels have been patched following specific disclosures.

The DNS tunnel is one entry in a documented, decades-long record of the internet being structurally exploitable, a record that has only expanded as AI systems absorbed the same legacy infrastructure vulnerabilities that have been weaponized against traditional software since the earliest days of networked computing.

AI security vulnerabilities, enterprise data protection, and the attack surfaces emerging from AI tool deployment are covered at The IT Horizon. Subscribe to our newsletter. We track every disclosure, patch, and architectural risk in the AI security landscape before it reaches your organization’s threat model.